The Mind of a Machine

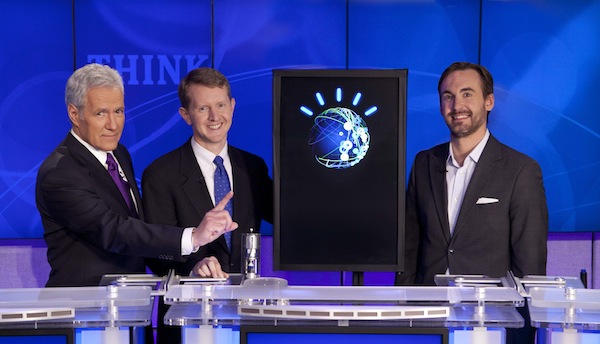

You’ve probably already heard much about IBM’s Watson supercomputer: the first machine to ever compete on Jeopardy and, quite possibly, the best natural language interpreter on the planet. While watching the IBM Challenge over the past 3 days, I was awestruck at just how far this team has come in understanding the nuances of the English language.

The machine seems to be able to parse through humor and misleading quotes to come up with answers that seem nearly impossible for statistical software to achieve. This is mostly due to a combination of deep analytics, natural language processing and machine learning.

I think this is a very timely challenge which parallels the work that a number of technology startups are doing. Here are two interesting companies to take a look at in the natural language and machine learning fields:

Siri

Siri is a natural language processing company that has received much attention over the last several years, especially following their purchase by Apple. Their “Virtual Personal Assistant” is astounding and it seems nearly certain that Apple will use this technology to create a much more advanced voice recognition system in future devices.

Wolfram Alpha

Wolfram Alpha uses structured data to answer questions. This is very different from the way that Watson comes up with its answers. Watson uses unstructured data, the same type as nearly all data available on the world wide web, and combines deep analytical capabilities with a host of other tools to organize and structure the data in order to rate a series of possible answers.

The future looks bright for the technology that Watson uses. A combination of powerful machine learning and natural language processing could allow computers to truly analyze the massive amounts of data we produce each and every day. We have so much data that, were it burned onto CDs and stacked, the pile would reach beyond the moon. Making sense of this data is most certainly the intention of IBM’s research team.

Watson vs. Deep Blue

Deep Blue is probably the best-known supercomputer in the world. Its competition with chess grandmaster Garry Kasparov is the classic example of man vs. machine. However, Watson is certainly not just a more powerful Deep Blue and will hopefully not suffer the same fate.

Deep Blue used a comparatively simple recursive search, a kind of problem well suited to computation. By iterating through many possible chess moves and countermoves to determine which leads to the best result, the machine leaves a human little chance of coming out on top. This can be visualized by the tree shown above.
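To make the idea of a recursive search over a game tree concrete, here is a minimal minimax sketch in Python. It is not Deep Blue's code, and it does not play chess: it assumes a hypothetical toy game in which two players alternately take 1, 2, or 3 stones from a pile and whoever takes the last stone wins. The names `MOVES`, `minimax`, and `best_move` are made up for this illustration. A real chess program cannot search to the end of the game the way this toy does, so it needs a depth limit and an evaluation function on top of the same basic recursion.

```python
from functools import lru_cache

# Hypothetical toy game: on each turn a player removes 1, 2, or 3 stones.
# Whoever takes the last stone wins.
MOVES = (1, 2, 3)


@lru_cache(maxsize=None)
def minimax(stones, maximizing):
    """Return +1 if the maximizing player wins with best play, else -1."""
    if stones == 0:
        # No stones left: the player who just moved took the last one and won.
        return -1 if maximizing else 1
    # Recurse into every legal move, i.e. every child in the game tree.
    scores = [minimax(stones - m, not maximizing) for m in MOVES if m <= stones]
    # The maximizer picks the best child, the minimizer picks the worst.
    return max(scores) if maximizing else min(scores)


def best_move(stones):
    """Choose the move whose subtree scores best for the player to move."""
    legal = [m for m in MOVES if m <= stones]
    return max(legal, key=lambda m: minimax(stones - m, False))


if __name__ == "__main__":
    for pile in (5, 6, 7):
        print(pile, "stones -> take", best_move(pile))
```

Each call to `minimax` corresponds to one node of the tree, and the recursion simply walks every branch below it. The search is exhaustive, which is why it works for a small game and why chess needs far more engineering.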

On the other hand, Watson uses many different techniques to come up with the best possible answer, perhaps the most important of which is Bayesian logic. Bayesian logic uses evidence from past events to estimate the probability of similar events in the future. A good example is the advanced spam filter, which must constantly adapt to changes in spam messaging. Obviously, spam filters have a much less advanced implementation of Bayesian logic than a system like Watson would employ, and they don’t combine it with the other artificial intelligence methods.
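The spam filter example can be sketched as a tiny naive Bayes classifier. This is only an illustration of the Bayesian idea under simplifying assumptions (whitespace tokenization, word independence, Laplace smoothing, at least one training message per label). It says nothing about how Watson itself is implemented, and the function names and the four training messages are invented for the example.

```python
import math
from collections import Counter


def train(messages):
    """Count words per label. `messages` is a list of (text, label) pairs,
    where label is 'spam' or 'ham'."""
    word_counts = {"spam": Counter(), "ham": Counter()}
    label_counts = Counter()
    for text, label in messages:
        label_counts[label] += 1
        word_counts[label].update(text.lower().split())
    return word_counts, label_counts


def spam_probability(text, word_counts, label_counts):
    """Estimate P(spam | text) with Bayes' rule and Laplace smoothing."""
    vocab = set(word_counts["spam"]) | set(word_counts["ham"])
    total_messages = sum(label_counts.values())
    log_scores = {}
    for label in ("spam", "ham"):
        n_words = sum(word_counts[label].values())
        # Prior: how common this label was in past messages.
        score = math.log(label_counts[label] / total_messages)
        for word in text.lower().split():
            # Likelihood of each word given the label, smoothed so that
            # unseen words do not produce a probability of zero.
            score += math.log((word_counts[label][word] + 1) / (n_words + len(vocab)))
        log_scores[label] = score
    # Normalize the two log scores into a probability.
    top = max(log_scores.values())
    weights = {label: math.exp(s - top) for label, s in log_scores.items()}
    return weights["spam"] / (weights["spam"] + weights["ham"])


# Invented training data, purely for illustration.
TRAINING = [
    ("win cash now", "spam"),
    ("cheap pills win prize", "spam"),
    ("meeting agenda for monday", "ham"),
    ("lunch on monday", "ham"),
]

if __name__ == "__main__":
    counts = train(TRAINING)
    print(spam_probability("win cash", *counts))
    print(spam_probability("monday meeting", *counts))
```

Adapting to new spam is just a matter of calling `train` again with newer messages included: the counts shift, and so do the probabilities.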

We should all hope that Watson does not suffer the same fate as Deep Blue. Shortly after the Kasparov competition, IBM shuttered the project and discontinued work on the computer system. There is a much more promising future in Watson, and it is likely that IBM will continue to invest in it. Even if they do not, the startups listed above and many others like them will continue pushing forward in this arena.

NOTE: See all of the moves from the Deep Blue vs. Kasparov competition.

AI & Watson

Leading futurist and artificial intelligence author Ray Kurzweil recently published an interview with Eric Brown, a research manager on the Watson project. Brown mentions some details about Watson’s future. Most interesting to me is his proposition of using Watson’s DeepQA technology to assist in technical support situations. Because of its ability to go through documentation, manuals, past issues, and known bugs, problems could be solved much more quickly and without human biases and inconsistencies.

It is interesting that Brown specifically mentions that Watson’s analytics processes are run in a single-threaded model. Kurzweil maintains in “The Singularity Is Near” that the key to the brain’s pattern-recognition abilities is the massive parallelism provided by its vast neural network. Watson gets its parallelism elsewhere: its hardware allows many processes to run at once across multi-core processors and a large network of computers.
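The pattern of single-threaded tasks that run side by side can be sketched in a few lines of Python. This is a generic illustration, not Watson’s architecture: `score_candidate` is a hypothetical stand-in for one analytic step (it merely counts vowels), and `score_all` hands many such steps to a pool of workers.

```python
from concurrent.futures import ThreadPoolExecutor


def score_candidate(candidate):
    """Hypothetical single-threaded scoring step.

    Counting vowels is a placeholder for real analysis; the point is that
    each call is an ordinary sequential function with no threading inside.
    """
    return candidate, sum(ch in "aeiou" for ch in candidate.lower())


def score_all(candidates, workers=4):
    """Run many independent single-threaded tasks on a pool of workers."""
    with ThreadPoolExecutor(max_workers=workers) as pool:
        # map() returns results in the same order as the inputs.
        return list(pool.map(score_candidate, candidates))


if __name__ == "__main__":
    print(score_all(["Toronto", "Chicago", "Boston"]))
```

A thread pool keeps the example short. For CPU-heavy work in CPython, `ProcessPoolExecutor` offers the same interface and spreads the tasks across cores, and a cluster extends the same idea across machines.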

When most people think of testing a machine’s humanness, they think of the Turing Test, which essentially measures the machine’s ability to imitate human behavior. However, the Feigenbaum Test might be much more important in advancing artificial intelligence. It measures whether or not a computer can pass as an expert in a field. Machines capable of passing the Feigenbaum Test would probably be capable of improving their own designs, which would let them drive their own advancement.

Kurzweil says that “the human brain can master about 100,000 concepts in a domain.” To become an expert in a field, then, a computer would need to fully understand roughly that many concepts. Because of this limit and the narrow range of information that would need to be collected about a specific subject, the Feigenbaum Test may in fact be much more achievable than Turing’s Test.

If you’re worried about a future human vs. machine war, Kurzweil says you have nothing to worry about. He posits that humans and machines will merge, and have in fact already started to. The “Singularity”, as the merger is known, will simply be another evolutionary step; nothing to be afraid of. As Ken Jennings wrote alongside his Final Jeopardy response, “I, for one, welcome our new computer overlords.”

UPDATE: Ken Jennings says that Watson’s precise buzzer timing gave it an unfair advantage. I certainly noticed this: Ken seemed very frustrated when he obviously knew the answer but was unable to buzz in first.